Introduction

Manufacturing defects, equipment failures, and regulatory violations often share a common cause: improper humidity control. In electronics assembly, RH levels above 60% accelerate moisture absorption, leading to "popcorning" and solder joint defects, while levels below 30% dramatically increase the risk of electrostatic discharge (ESD) damage.

For power generation facilities, increasing fuel moisture from 20% to 50% can slash boiler efficiency by 8-10% and reduce steam generation by 20%.

A hygrometer measures relative humidity—the percentage of water vapor present in air compared to the maximum the air can hold at a given temperature.

For industrial processes operating in extreme environments, selecting the right hygrometer technology isn't just about accuracy—it's about maintaining product quality, process efficiency, regulatory compliance, and profitability.

TLDR:

- Different hygrometer technologies suit specific industrial environments and temperature ranges

- Improper humidity control causes defects, corrosion, and safety risks in manufacturing

- Chilled-mirror hygrometers serve as NIST reference standards, with dew point uncertainties as low as ±0.04°C

- High-temperature industrial processes require specialized analyzers capable of operating at 1200°F or higher

- Regular calibration every 6-12 months prevents sensor drift and maintains accuracy

What is Relative Humidity and Why Accurate Measurement Matters

For industrial facilities, controlling moisture means controlling product quality, energy costs, and regulatory compliance. Relative humidity (RH) measures the ratio of water vapor currently in the air to the maximum amount the air can hold at a specific temperature and pressure.

ASHRAE defines RH as the "ratio of the mole fraction of water vapor to the mole fraction of water vapor saturated at the same temperature and barometric pressure."

The Temperature-Dependent Nature of RH

The defining characteristic of relative humidity is its temperature dependence. Warm air can hold significantly more moisture than cold air: near room temperature, a rise in air temperature of just 1°C lowers relative humidity by approximately 3 percentage points at 50% RH, even though the amount of water vapor in the air is unchanged.

This relationship means that industrial hygrometers must measure temperature alongside humidity to provide meaningful data.
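The size of that shift can be checked with a short calculation. The sketch below assumes the Magnus approximation for saturation vapor pressure over water (a commonly used coefficient set, not one specified in this article) and air whose water vapor content stays fixed while its temperature changes. The function names are illustrative.

```python
import math

# Magnus coefficients for saturation vapor pressure over liquid water.
# Assumed values (a commonly used set), reasonable for about -45 to 60 degC.
MAGNUS_A = 17.62
MAGNUS_B = 243.12  # degC
MAGNUS_C = 6.112   # hPa


def saturation_vapor_pressure_hpa(temp_c: float) -> float:
    """Approximate saturation vapor pressure over water, in hPa."""
    return MAGNUS_C * math.exp(MAGNUS_A * temp_c / (MAGNUS_B + temp_c))


def rh_after_temperature_change(rh_percent: float, start_c: float, end_c: float) -> float:
    """RH after heating or cooling air whose vapor pressure stays constant."""
    # The actual vapor pressure is fixed by the starting conditions.
    vapor_pressure = rh_percent / 100.0 * saturation_vapor_pressure_hpa(start_c)
    # Only the saturation pressure (the denominator of RH) changes with temperature.
    return 100.0 * vapor_pressure / saturation_vapor_pressure_hpa(end_c)
```

Calling `rh_after_temperature_change(50.0, 20.0, 21.0)` gives a value close to 47% RH, in line with the roughly 3-point drop described above.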

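Above 212°F, relative humidity becomes increasingly limited as a measurement scale. Beyond the boiling point, the saturation vapor pressure of water exceeds atmospheric pressure, so a gas at atmospheric pressure can never reach 100% RH. At 400°F, the maximum possible RH is only 5.9%, and at 700°F, it drops to just 0.48%.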

This makes RH "a useless and even misleading scale for indicating moisture control level above 212°F." For high-temperature industrial processes, absolute humidity or moisture by volume percentage provides more useful measurements.
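The ceiling on RH at high temperature follows from a simple ratio: even if the gas were pure water vapor at atmospheric pressure, its vapor pressure could not exceed the total pressure. The sketch below assumes a total pressure of 101,325 Pa and uses the IAPWS-IF97 saturation-pressure equation for water (an assumption of this example, not a method named in the article) to estimate that ceiling.

```python
import math

# IAPWS-IF97 Region 4 coefficients (saturation line of water), n1..n10.
_N = (
    0.11670521452767e4, -0.72421316703206e6, -0.17073846940092e2,
    0.12020824702470e5, -0.32325550322333e7, 0.14915108613530e2,
    -0.48232657361591e4, 0.40511340542057e6, -0.23855557567849,
    0.65017534844798e3,
)


def saturation_pressure_pa(temp_k: float) -> float:
    """Saturation pressure of water in Pa, valid from 273.15 K to 647.096 K."""
    if not 273.15 <= temp_k <= 647.096:
        raise ValueError("temperature outside the liquid-vapor saturation range")
    theta = temp_k + _N[8] / (temp_k - _N[9])
    a = theta**2 + _N[0] * theta + _N[1]
    b = _N[2] * theta**2 + _N[3] * theta + _N[4]
    c = _N[5] * theta**2 + _N[6] * theta + _N[7]
    p_mpa = (2.0 * c / (-b + math.sqrt(b * b - 4.0 * a * c))) ** 4
    return p_mpa * 1e6


def max_rh_percent(temp_f: float, total_pressure_pa: float = 101_325.0) -> float:
    """Highest RH reachable when vapor pressure cannot exceed total pressure."""
    temp_k = (temp_f - 32.0) / 1.8 + 273.15
    ratio = total_pressure_pa / saturation_pressure_pa(temp_k)
    return min(100.0, 100.0 * ratio)
```

Evaluating `max_rh_percent(400.0)` and `max_rh_percent(700.0)` should land close to the 5.9% and 0.48% figures quoted above. The equation stops at the critical point of water (about 705°F), beyond which no saturation pressure is defined.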

Industrial Impact of Humidity Control

Understanding these temperature relationships explains why accurate measurement matters so critically in industrial settings.

Manufacturing Defects and Product Quality:

In printed circuit board assembly, humidity levels directly correlate to defect rates. RH above 60% speeds up moisture absorption, causing delamination during reflow soldering.

Conversely, RH below 30% raises ESD risk: the probability of voltages exceeding 8 kV is significantly higher at 15% RH than at 50% RH.

Atmospheric corrosion rates for metals like aluminum increase exponentially when RH exceeds 50-60%, as higher humidity creates thicker nanoscale water films on metal surfaces that accelerate oxidation.

Process Efficiency and Energy Consumption:

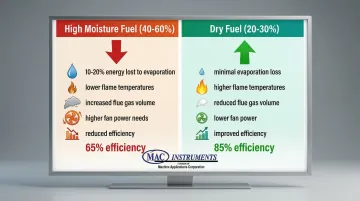

In power generation, fuel moisture content directly impacts efficiency. High moisture content in biomass fuel (40-60%) consumes 10-20% of the fuel's energy just for evaporation.

Drier fuel produces higher adiabatic flame temperatures, reduced flue gas volumes, and lower fan power requirements.
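A rough energy balance shows where the 10-20% figure comes from. The sketch below is illustrative only: the dry-fuel heating value (19 MJ/kg) and the latent heat of water (2.44 MJ/kg) are assumed typical values rather than numbers from this article, and the sensible heat needed to warm the water and steam is ignored.

```python
def evaporation_energy_fraction(
    moisture_fraction: float,
    dry_heating_value_mj_per_kg: float = 19.0,  # assumed typical value for dry biomass
    latent_heat_mj_per_kg: float = 2.44,        # assumed latent heat of water
) -> float:
    """Fraction of the fuel's energy spent evaporating its own moisture.

    moisture_fraction is the wet-basis moisture content (0.5 means 50%).
    """
    if not 0.0 <= moisture_fraction < 1.0:
        raise ValueError("moisture_fraction must be in [0, 1)")
    # Per kilogram of wet fuel: only the dry portion releases heat.
    energy_released = (1.0 - moisture_fraction) * dry_heating_value_mj_per_kg
    # Every kilogram of water carried in must be evaporated.
    energy_to_evaporate = moisture_fraction * latent_heat_mj_per_kg
    return energy_to_evaporate / energy_released
```

With these assumptions, 40% moisture costs roughly 9% of the fuel's energy and 60% moisture roughly 19%, which is consistent with the range quoted above. The loss grows faster than the moisture content itself because wetter fuel both contains more water and delivers less combustible material per kilogram.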

Regulatory Compliance Requirements:

Industries face strict regulatory requirements for humidity control:

- Pharmaceutical facilities must comply with FDA 21 CFR 211.42(c)(10)(ii), which mandates temperature and humidity controls in aseptic processing areas to prevent microbial growth and static discharge

- Food processing facilities operating under FSMA (21 CFR Part 117) must evaluate environmental pathogens as hazards and implement environmental monitoring as a verification activity

- NIST-traceable calibration links each measurement back to primary standards through a documented chain of comparisons, providing the foundation for regulatory compliance

Types of Hygrometers: How Different Instruments Measure Humidity

Mechanical Hygrometers

Hair tension and metal-paper coil hygrometers use materials that expand or contract with humidity changes.

These simple, power-free instruments have significant limitations in industrial applications:

- Accuracy drift over time requiring frequent recalibration

- Limited durability in harsh environments

- Slow response times (often minutes to hours)

- Susceptibility to contamination and mechanical wear

Psychrometers (Wet and Dry Bulb)

Psychrometers compare temperatures between a dry thermometer and a wet thermometer cooled by evaporation, with the difference determining relative humidity.

Advantages: Reliable for field measurements, no electronic components to fail, well-established calculation methods

Limitations: Requires water supply and wick maintenance, impractical for automated continuous monitoring, limited accuracy in high-temperature environments
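The "well-established calculation" is usually some form of the psychrometric equation. The sketch below is one hedged version: it assumes the Magnus approximation for saturation vapor pressure, a psychrometer coefficient of 6.62e-4 per °C (a value often quoted for well-ventilated instruments), and standard atmospheric pressure by default. None of these constants come from this article, and real instruments should use the coefficient given by their manufacturer.

```python
import math

# Assumed psychrometer coefficient for a ventilated instrument, per degC.
PSYCHROMETER_COEFF = 6.62e-4


def saturation_vapor_pressure_hpa(temp_c: float) -> float:
    """Magnus approximation over liquid water, in hPa (assumed coefficients)."""
    return 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))


def rh_from_wet_dry_bulb(dry_c: float, wet_c: float, pressure_hpa: float = 1013.25) -> float:
    """Relative humidity (%) from dry-bulb and wet-bulb temperatures."""
    if wet_c > dry_c:
        raise ValueError("wet-bulb temperature cannot exceed dry-bulb temperature")
    # Actual vapor pressure: saturation at the wet bulb minus the cooling term.
    vapor_pressure = (
        saturation_vapor_pressure_hpa(wet_c)
        - PSYCHROMETER_COEFF * pressure_hpa * (dry_c - wet_c)
    )
    rh = 100.0 * vapor_pressure / saturation_vapor_pressure_hpa(dry_c)
    # Clamp small numerical or measurement excursions into the physical range.
    return max(0.0, min(100.0, rh))
```

For example, a 25°C dry bulb with an 18°C wet bulb works out to roughly 50% RH under these assumptions, while equal wet and dry bulb readings correspond to saturated air.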

Capacitive and Resistive Hygrometers

Modern electronic sensors measure humidity through changes in electrical properties.

Capacitive sensors detect dielectric constant changes as a polymer film absorbs moisture. Resistive sensors measure conductivity changes instead.

Key specifications include:

| Sensor Type | Typical Accuracy | Response Time | Operating Range |
|---|---|---|---|
| Capacitive (HUMICAP®) | ±1.7% RH | 8-40 seconds | -40°C to +80°C |
| Capacitive (HC1000) | ±0.95% RH | <15 seconds | -40°C to +180°C |
| Resistive Polymer | ±2-3% RH | 15-60 seconds | -20°C to +60°C |

Capacitive sensors generally offer faster response times and better accuracy than resistive types. However, harsh environments require protective filters that can slow response.

Chilled Mirror Dew Point Hygrometers

Chilled-mirror hygrometers serve as reference standards at NIST, measuring the temperature at which condensation forms on a mirror—a fundamental measurement of moisture content. These instruments offer expanded uncertainties as low as 0.04°C for dew points between 0°C and 85°C.

Applications: Calibration laboratories, precision environmental chambers, pharmaceutical manufacturing, semiconductor fabrication

Limitations: Higher cost ($5,000-$50,000), slower response times, require clean environments, not suitable for continuous process monitoring in harsh conditions
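Because a chilled-mirror instrument reports dew point rather than RH, converting between the two also requires the air temperature. The sketch below shows both directions using the Magnus approximation, which is an assumption of this example and is only reasonable up to about 60°C. The function names are illustrative.

```python
import math

# Assumed Magnus coefficients over liquid water (about -45 to 60 degC).
A = 17.62
B = 243.12  # degC


def rh_from_dew_point(dew_point_c: float, air_temp_c: float) -> float:
    """Relative humidity (%) implied by a dew point at a given air temperature."""
    # Ratio of saturation pressures; the Magnus pre-factor cancels out.
    exponent = A * dew_point_c / (B + dew_point_c) - A * air_temp_c / (B + air_temp_c)
    return 100.0 * math.exp(exponent)


def dew_point_from_rh(air_temp_c: float, rh_percent: float) -> float:
    """Dew point (degC) from air temperature and relative humidity."""
    if not 0.0 < rh_percent <= 100.0:
        raise ValueError("rh_percent must be in (0, 100]")
    gamma = math.log(rh_percent / 100.0) + A * air_temp_c / (B + air_temp_c)
    return B * gamma / (A - gamma)
```

A 10°C dew point corresponds to roughly 53% RH in 20°C air but only about 29% RH in 30°C air, which illustrates why the same moisture content produces very different RH readings at different temperatures.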

High-Temperature Industrial Moisture Analyzers

Many standard hygrometers fail above about 120°F due to sensor limitations, and even the high-temperature capacitive probes listed above are limited to 180°C (356°F). The physics of relative humidity at elevated temperatures adds a second constraint. Specialized high-temperature moisture analyzers use solid-state sensor technology to measure absolute humidity and moisture by volume in extreme environments.

MAC Instruments manufactures moisture analyzers that operate in extreme conditions:

- Temperature range up to 1200°F/650°C (optional 2400°F/1300°C)

- NIST-traceable accuracy of ±1% of full scale

- Patented solid-state sensor technology

- No chemicals, wet bulb techniques, compressed air, or optical systems required

- Weatherproof construction for indoor or outdoor installation

This design eliminates common failure points in harsh industrial environments like power generation, cement production, and metal processing facilities.

Industrial Applications: Where Hygrometers Are Critical

Power Generation and Energy Production

Humidity monitoring in combustion processes directly impacts efficiency and emissions. Moisture content in fuel determines how much energy is consumed for evaporation versus productive combustion.

Drier fuel results in:

- Higher adiabatic flame temperatures

- Reduced flue gas volumes, allowing smaller boiler sizing

- Lower fan power requirements

- Improved combustion efficiency

Continuous stack emission monitoring requires moisture measurement for EPA compliance under 40 CFR Parts 60 and 75. Analyzers must withstand extreme stack gas temperatures, some reaching 1200°F or higher, while maintaining measurement accuracy for regulatory reporting. Industrial moisture measurement systems designed for these demanding conditions deliver the precision required for emissions compliance.

Manufacturing and Processing Industries

Moisture control impacts product quality and energy efficiency across multiple heavy industries.

Cement Production:

Kiln atmosphere humidity affects clinker quality and energy consumption. Precise moisture control ensures optimal chemical reactions during calcination while minimizing fuel waste.

Metal Processing:

Furnace atmosphere humidity control prevents oxidation and decarburization during heat treatment. Key specifications mandate rigorous atmosphere controls:

- SAE AMS 2759 standards for heat treatment processes

- API specifications for critical metal processing

- Continuous humidity monitoring in annealing, hardening, and stress-relieving operations

Chemical Manufacturing:

The deliquescence relative humidity (DRH), the threshold at which a salt absorbs enough moisture to dissolve, is a critical control point. For NaCl, whose DRH is about 75% at room temperature, keeping humidity below that threshold prevents premature dissolution, which directly affects downstream chlorine and caustic soda production.

Food and Beverage Industry

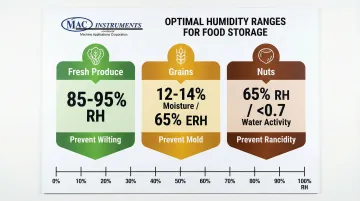

Optimal humidity ranges vary significantly by product category:

| Product Category | Optimal RH/Moisture Target | Purpose |
|---|---|---|
| Fresh Produce | 85-95% RH | Prevent wilting, extend shelf life |
| Grains | 12-14% moisture (~65% ERH) | Prevent mold growth, maintain quality |
| Nuts | 65% RH (Water Activity <0.7) | Prevent rancidity, ensure crispness |

Continuous conveyor ovens for meat and poultry cooking require steam injection control to maintain optimal moisture levels.

Specialized moisture analyzers for food processing feature NEMA 4X/IP66 waterproof construction and sanitary mounting systems, remaining operational during clean-in-place procedures.

Pulp, Paper, and Textile Production

TAPPI T 402 specifies standard conditioning at 50% RH and 23°C for paper products, while ISO 139 requires 65% RH at 20°C for textiles. Moisture content directly affects material properties:

- Paper tensile strength varies by 2-3% for each 1% change in moisture content

- Humidity fluctuations cause expansion and contraction, leading to registration problems in printing

- Proper moisture levels prevent static buildup and web breaks during high-speed processing

Factors Affecting Hygrometer Accuracy and Reliability

Temperature variations create measurement challenges beyond the inherent temperature-dependence of RH. Thermal gradients within measurement chambers cause localized condensation or evaporation, skewing readings.

Sensor placement must account for airflow patterns, avoiding dead zones or high-velocity areas that don't represent average conditions. Beyond temperature, several environmental factors directly impact measurement reliability.

Industrial environments expose sensors to chemical vapors, dust, and corrosive gases that accelerate drift. Common contamination effects include:

- Chemical vapors offsetting capacitive sensor readings

- Surface deposits changing resistive sensor conductivity

- Particulate buildup blocking vapor access to sensing elements

Manufacturers provide protective filters and chemical purge options—heating the sensor to drive off volatiles—to mitigate contamination effects.

Industrial hygrometers drift due to sensor aging and environmental exposure. The UK's National Physical Laboratory (NPL) recommends calibrating RH probes every 6-12 months, depending on environment harshness and measurement criticality.

Reference instruments like chilled-mirror hygrometers typically require calibration every 1-2 years.

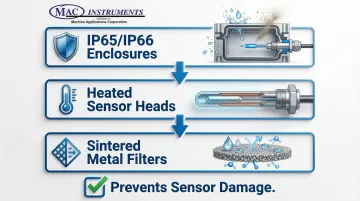

When process temperatures drop below the dew point, condensation forms on sensors, causing erratic readings or permanent damage. Protection strategies include:

- IP65/IP66 rated enclosures for harsh environments

- Heated sensor heads preventing condensation in variable-temperature applications

- Sintered metal filters protecting against liquid water ingress while maintaining vapor permeability

How to Choose the Right Hygrometer for Your Application

Selecting the right hygrometer requires matching instrument capabilities to your specific process conditions and accuracy needs. Consider these critical factors to ensure reliable performance and compliance.

Matching Measurement Range to Your Process

The measurement range must cover your operating conditions with adequate buffer for process variations:

| Application Type | Measurement | Temperature Range |
|---|---|---|
| HVAC and General Industrial | 0-100% RH | -40°C to +80°C |
| Drying Processes | Low dew points | -100°C to -40°C |
| High-Temperature Processes | Absolute humidity | Up to 1200°F+ |

Accuracy Standards by Industry

Different industries demand varying precision levels based on product quality requirements and regulatory compliance:

| Application | Required Accuracy |
|---|---|
| General industrial monitoring | ±2-3% RH |
| Quality-critical processes | ±1% RH |
| Pharmaceutical/semiconductor | ±0.8% RH or ±0.04°C dew point |
| Regulatory compliance | NIST-traceable with documented calibration |

Response Time Considerations

Spot-checking applications tolerate slower response times of 60-120 seconds. Process control loops require faster updates, typically 10-30 seconds, to maintain tight tolerances.

Filter protection slows sensor response. Balance your need for contamination protection against control speed requirements for your specific application.

Environmental Protection Requirements

Match environmental protection to your installation conditions:

- Select sensors rated for your process temperature extremes

- Specify chemical-resistant materials and protective filters for corrosive atmospheres

- Outdoor installations need NEMA 4X/IP65 rating minimum (weatherproof enclosures), with heated enclosures for freezing conditions

- Dusty environments benefit from sintered metal filters with optional blow-back cleaning systems

For high-temperature applications up to 650°C (1200°F), or up to 1300°C (2400°F) with the optional configuration, specialized moisture analyzers like those from MAC Instruments provide accurate absolute humidity measurement in extreme process conditions.

NIST Traceability and Calibration

Regulated industries including pharmaceutical, food processing, and aerospace require calibration certificates traceable to NIST standards. Verify your supplier provides ISO 17025 accredited calibration or direct NIST traceability documentation.

Built-in calibration systems simplify ongoing compliance by enabling field verification without sending instruments offsite for recalibration.

Frequently Asked Questions

What is the instrument used to measure relative humidity?

Hygrometers measure relative humidity. Main types include capacitive/resistive electronic sensors for industrial use, chilled-mirror hygrometers for reference standards, psychrometers for field work, and specialized high-temperature moisture analyzers for processes above 212°F.

Is 70% humidity considered high humidity?

70% RH is moderately high for indoor spaces. ASHRAE Standard 62.1 recommends below 65% in occupied areas to reduce microbial growth. Context matters—produce storage needs 85-95% RH, while electronics manufacturing targets 30-50% RH.

What is the difference between relative humidity and dew point?

Relative humidity is temperature-dependent: it is the percentage of the maximum moisture that air can hold at its current temperature. Dew point is an absolute measure: the temperature at which condensation begins to form. For a fixed moisture content and pressure, dew point stays constant as the air temperature changes, making it more useful for high-temperature processes.

How often should industrial hygrometers be calibrated?

Industrial RH sensors need calibration every 6-12 months, with harsh environments requiring more frequent checks. Reference standards extend to 1-2 year intervals. NIST recommends establishing intervals based on historical stability data and drift trends.

Can standard hygrometers work in high-temperature industrial processes?

No. Many standard hygrometers fail above about 120°F due to sensor limitations, and even high-temperature capacitive probes are limited to roughly 180°C (356°F). Above 212°F, RH measurements become impractical as the maximum possible RH drops dramatically. High-temperature processes require specialized moisture analyzers capable of operating at 1200°F or higher.

What accuracy level do I need for my application?

Match accuracy to application criticality: ±2-3% RH for general HVAC and industrial monitoring, ±1% RH for quality-critical manufacturing, and ±0.8% RH or better for pharmaceutical and semiconductor applications. For regulatory compliance, prioritize NIST-traceable calibration over absolute accuracy.

Selecting the right hygrometer technology ensures your industrial processes maintain the precise humidity control required for product quality, energy efficiency, and regulatory compliance. For extreme environments, MAC Instruments manufactures specialized moisture analyzers operating at temperatures up to 1200°F (with optional 2400°F capability) where standard instruments fail, delivering NIST-traceable accuracy for demanding industrial applications.